Deliver the experience today’s shoppers expect

Use real-time insights and proven solutions to improve page speed and site performance, and deliver the superior digital experiences that drive higher conversions and shopper satisfaction.

Fast

Provide a faster, more convenient shopping experience across the journey from browsing to buying to buying again.

Personal

Every shopper who interacts with your brand gets that VIP feeling of a personalized experience.

Secure

Shoppers can trust your brand with their financial and personal data.

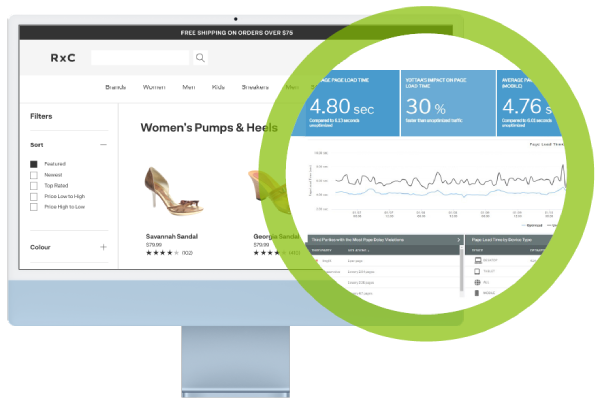

Site speed matters now more than ever

Pages that take more than 4 seconds to load see bounce rates above 60%. Rich, complex digital elements drive that lag. Improve site speed by optimizing how content loads and how third-party apps are sequenced.

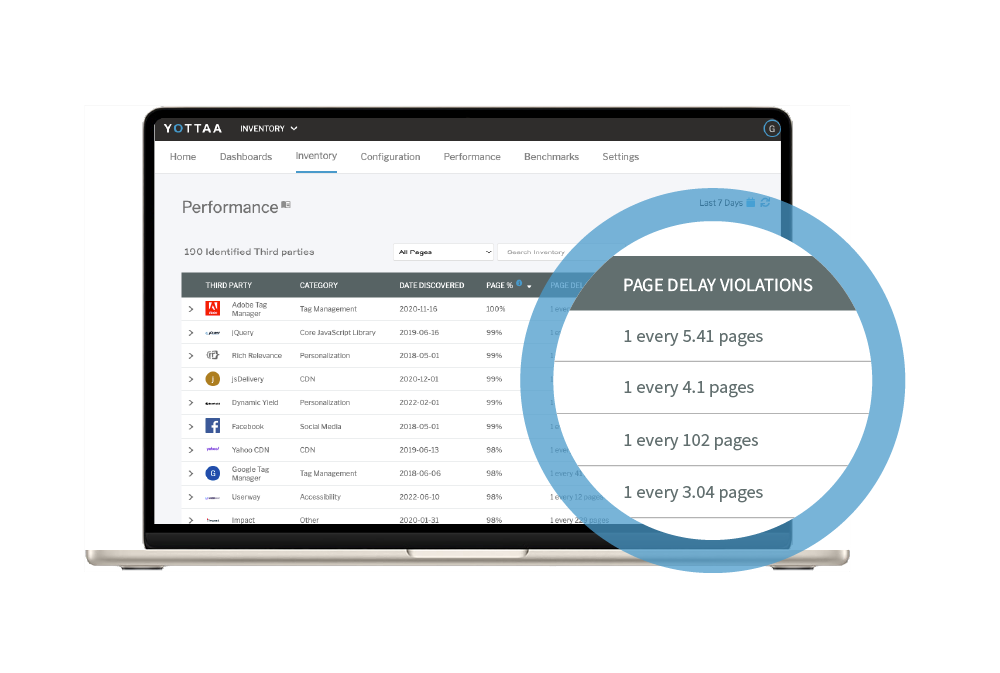

Take control of site performance

Gain complete visibility into third-party apps and critical metrics, including Core Web Vitals, so you can analyze and make real-time improvements that keep your site running at peak performance.

See immediate results

You’ve invested a ton of time and money in your website and the marketing campaigns driving customers to it. Yottaa helps increase your ROI on those investments. With little technical effort, you’ll see an incremental lift in revenue as a faster site improves your conversion rates.

Our customers have seen results like:

30%

Increase in site speed

10%

Conversion rate increase

Are slow-loading pages costing you conversions?

Find out with a Site Speed Snapshot™.

Resource center

2024 Annual eCommerce Tech Buyers’ Guide

Get the report to understand which third parties are impacting your site performance and what you can do to improve it.

Are slow-loading pages costing you conversions?

See how Yottaa can help you optimize site performance in real time for superior shopper experiences and higher conversions.

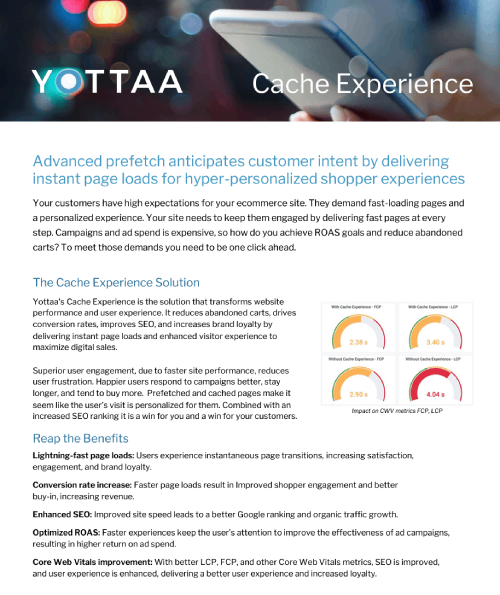

Yottaa’s Cache Experience

Cache Experience helps you deliver fast, engaging digital experiences that keep shoppers on your site and turn them from browsers into buyers.